Summary: For years, AI meeting tools produced walls of text with no indication of who said what. Speaker diarization, the task of automatically segmenting audio by speaker, was the bottleneck. Early systems failed on real meetings because voices overlap, microphones vary, and participants join and leave. Modern diarization works because three advances finally came together: deep neural embeddings, overlap-aware segmentation, and clustering that handles uncertainty. CraftNote combines these with 94.92% transcription accuracy and Speaker Memory, which stores voice profiles so that labeled speakers are recognized automatically in every future meeting.

The "Who Said What" Problem

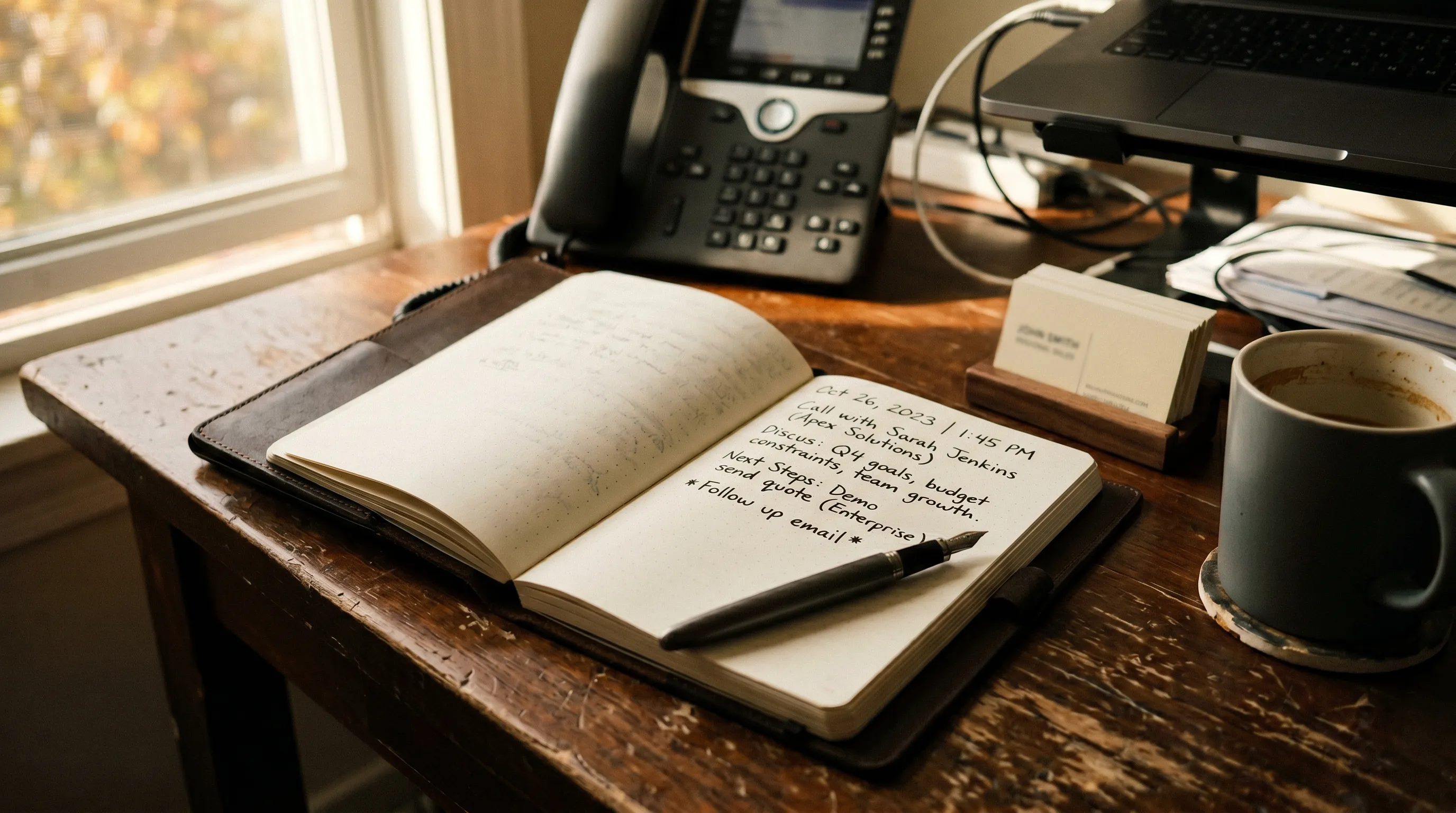

Imagine reading a three-thousand-word transcript of a meeting and having no idea who said anything. Every sentence blurs into every other sentence. Decisions float in the air with no owner. Action items exist in a vacuum. This was the state of automatic meeting transcription as recently as a few years ago.

Transcription itself was never the hardest problem. Turning sound waves into text was largely solved by Whisper-class models trained on vast multilingual datasets. The harder problem was attribution. Who exactly said "we need to ship this by Friday"? The project manager making a commitment, or the engineer pushing back sarcastically? Without speaker labels, the transcript is half the picture.

Speaker diarization is the technical name for the part that answers this question. It is the process of segmenting an audio stream into chunks, grouping chunks that belong to the same speaker, and assigning each group a label. Good diarization turns an undifferentiated transcript into a conversation you can actually follow.
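As a rough illustration of the last step, the sketch below merges diarization output with a timestamped transcript by giving each word to the speaker turn it overlaps most. The `Turn` and `Word` types, the sample timings, and the `"Unknown"` fallback are all assumptions made for this example, not the format of any particular product.

```python
from dataclasses import dataclass


@dataclass
class Turn:
    speaker: str  # generic diarization label, e.g. "Speaker 1"
    start: float  # seconds
    end: float


@dataclass
class Word:
    text: str
    start: float  # seconds
    end: float


def overlap(a_start, a_end, b_start, b_end):
    """Length in seconds of the intersection of two time intervals."""
    return max(0.0, min(a_end, b_end) - max(a_start, b_start))


def attribute_words(words, turns):
    """Label each word with the speaker whose turn overlaps it most."""
    lines = []  # list of [speaker, [word texts]]
    for word in words:
        best = max(turns, key=lambda t: overlap(word.start, word.end, t.start, t.end))
        if overlap(word.start, word.end, best.start, best.end) == 0.0:
            speaker = "Unknown"  # no turn covers this word at all
        else:
            speaker = best.speaker
        # Consecutive words from the same speaker are merged into one line.
        if lines and lines[-1][0] == speaker:
            lines[-1][1].append(word.text)
        else:
            lines.append([speaker, [word.text]])
    return [f"{speaker}: {' '.join(texts)}" for speaker, texts in lines]


# Hypothetical diarization output and transcript timings.
turns = [Turn("Speaker 1", 0.0, 2.0), Turn("Speaker 2", 2.0, 4.0)]
words = [
    Word("We", 0.1, 0.3), Word("ship", 0.4, 0.8), Word("Friday.", 0.9, 1.5),
    Word("Do", 2.2, 2.4), Word("we?", 2.5, 2.9),
]
transcript = attribute_words(words, turns)
for line in transcript:
    print(line)
```

Picking the turn with the largest overlap is a simple choice that works when turns do not overlap each other; overlapping speech needs more careful handling.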

Why Diarization Failed for So Long

Diarization looks easy until you try it on a real meeting. Four specific problems broke earlier systems.

Overlapping speech. People talk over each other constantly, especially in engaged conversations. Traditional systems assumed one speaker at a time. When two people spoke simultaneously, the system would either pick one arbitrarily or produce garbled attribution for both.

Microphone variability. In a video call, one participant has a studio microphone, another is using a built-in laptop microphone in a coffee shop, and a third is on a phone. Voice characteristics that help the model distinguish speakers get distorted differently in each case. Early systems trained on clean data collapsed when they met this reality.

Similar voices. Two thirty-year-old men from the same country with similar education often have voices that are objectively close in acoustic space. Early systems confused them constantly. You would see "Speaker 1" and "Speaker 2" swap roles halfway through the transcript with no explanation.

Changing participant counts. Meetings are not static. Someone joins twenty minutes in. Someone else leaves. A new voice appears. Systems that assumed a fixed number of speakers could not handle this gracefully, and the result was attribution that drifted out of sync as the meeting progressed.

Any one of these problems degraded accuracy. Stacked together, they made diarization on real meetings a frustrating experience for years.

How Modern Diarization Actually Works

Three advances closed the gap.

1. Deep Neural Embeddings

Instead of analyzing raw acoustic features directly, modern systems pass audio through a deep neural network trained on millions of voices. The network produces a compact vector — an embedding — that captures the essence of the voice in a form robust to microphone quality, background noise, and minor acoustic variation. Two embeddings from the same speaker land close together in the embedding space even across different recording conditions. Two embeddings from different speakers land far apart, even if those speakers sound superficially similar.
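"Close together" and "far apart" are usually measured with cosine similarity between embedding vectors. The sketch below uses made-up four-dimensional vectors purely for illustration; real embeddings come from a trained network and typically have hundreds of dimensions.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors: 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Hypothetical embeddings, invented for this example.
speaker_a_studio_mic = [0.9, 0.1, 0.3, 0.2]
speaker_a_phone = [0.8, 0.2, 0.35, 0.15]
speaker_b_laptop = [0.1, 0.9, 0.2, 0.4]

same_speaker = cosine_similarity(speaker_a_studio_mic, speaker_a_phone)
different_speakers = cosine_similarity(speaker_a_studio_mic, speaker_b_laptop)

print(f"same speaker, different microphones: {same_speaker:.2f}")
print(f"different speakers: {different_speakers:.2f}")
```

With these toy values the same-speaker pair scores much higher than the cross-speaker pair, which is the property the later clustering stage relies on.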

2. Better Segmentation

Older systems cut the audio into fixed chunks and tried to label each chunk. Modern systems use voice activity detection and overlap-aware segmentation, identifying precisely when one speaker starts and another stops, and detecting when two speakers overlap. This produces cleaner input for the embedding stage, which in turn produces cleaner clustering.
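To make the voice activity detection step concrete, here is a deliberately simple energy-threshold sketch. It is an assumption-laden toy: production systems generally use trained neural models for both voice activity and overlap detection, and the `detect_speech` function, the threshold, and the 20 ms frame size are chosen only for illustration.

```python
def detect_speech(frame_energies, threshold, frame_ms=20):
    """Return (start_ms, end_ms) for each run of frames louder than threshold."""
    segments = []
    start = None  # index of the first frame in the current speech run
    for i, energy in enumerate(frame_energies):
        if energy > threshold and start is None:
            start = i  # speech begins
        elif energy <= threshold and start is not None:
            segments.append((start * frame_ms, i * frame_ms))  # speech ends
            start = None
    if start is not None:
        # Audio ended while someone was still speaking.
        segments.append((start * frame_ms, len(frame_energies) * frame_ms))
    return segments


# Hypothetical per-frame energies: silence, speech, silence, speech, silence.
energies = [0.01, 0.02, 0.5, 0.6, 0.55, 0.03, 0.02, 0.4, 0.45, 0.01]
segments = detect_speech(energies, threshold=0.1)
print(segments)
```

Each resulting segment would then be passed to the embedding model, rather than a fixed-length chunk that might straddle two speakers.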

3. Clustering With Uncertainty

Once embeddings are generated for every segment, the system clusters similar embeddings to identify distinct speakers. Modern clustering methods handle uncertainty well: if two speakers are acoustically close, the system can flag the ambiguity rather than commit confidently to a wrong answer.
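One way to picture clustering with uncertainty is a greedy scheme that assigns each segment to the most similar existing cluster, opens a new cluster when nothing is similar enough, and flags a segment as ambiguous when the best and second-best clusters score almost the same. This is a minimal sketch under assumed values for `threshold` and `margin`, not the method any specific product uses; real systems often rely on more sophisticated clustering.

```python
import math


def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def cluster_segments(embeddings, threshold=0.8, margin=0.05):
    """Greedy clustering: one (cluster_index, is_ambiguous) pair per embedding."""
    centroids = []  # running sum of member embeddings (cosine ignores scale)
    results = []
    for emb in embeddings:
        scores = sorted(((cosine(emb, c), i) for i, c in enumerate(centroids)), reverse=True)
        if not scores or scores[0][0] < threshold:
            # Nothing similar enough: treat this as a new speaker.
            centroids.append(list(emb))
            results.append((len(centroids) - 1, False))
            continue
        best_score, best = scores[0]
        runner_up = scores[1][0] if len(scores) > 1 else -1.0
        # Flag the segment when two clusters are nearly tied.
        ambiguous = best_score - runner_up < margin
        centroids[best] = [c + e for c, e in zip(centroids[best], emb)]
        results.append((best, ambiguous))
    return results


# Hypothetical 2-D embeddings; the third sits between two similar voices.
segments = [[1.0, 0.0], [0.6, 0.8], [0.9, 0.4], [0.5, 0.85]]
results = cluster_segments(segments)
for index, (cluster, ambiguous) in enumerate(results):
    note = " (ambiguous)" if ambiguous else ""
    print(f"segment {index} -> Speaker {cluster + 1}{note}")
```

Because new clusters are opened on demand, this kind of scheme does not need to know the number of speakers in advance, which also helps when participants join partway through a meeting.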

Stack these three advances and you get diarization that works on real meetings, in noisy rooms, with overlapping speech, across varying microphones. In independent benchmark testing, CraftNote reached 94.92% transcription accuracy across six different audio types.

Speaker Memory: Beyond Single-Meeting Diarization

Solving diarization within a single meeting is only half the prize. The bigger prize is recognizing the same speakers across every meeting they attend.

Most diarization systems are stateless. They cluster speakers within one recording and forget everything when the meeting ends. Next week, the same four people meet again, and the system starts from scratch, producing "Speaker 1" and "Speaker 2" labels that do not match the previous week.

CraftNote's Speaker Memory changes this. The system maintains voice profiles across all your meetings permanently. Once you identify a speaker ("This is Sarah"), the profile is stored, and Sarah is recognized automatically in every future recording, with no retraining needed. Over time, the profile improves as more voice samples are captured.
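The general idea of a persistent voice profile can be sketched as a store of named, accumulated embeddings that new voices are compared against. The code below is a hypothetical illustration only: the `SpeakerMemory` class, its methods, and the `match_threshold` value are assumptions for this example and do not describe CraftNote's actual implementation.

```python
import math


def _cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


class SpeakerMemory:
    """Hypothetical store mapping names to accumulated voice embeddings."""

    def __init__(self, match_threshold=0.75):
        self.match_threshold = match_threshold
        self.profiles = {}  # name -> (sum_of_embeddings, sample_count)

    def enroll(self, name, embedding):
        """Add a labeled sample, creating the profile if it does not exist."""
        total, count = self.profiles.get(name, ([0.0] * len(embedding), 0))
        self.profiles[name] = ([t + e for t, e in zip(total, embedding)], count + 1)

    def identify(self, embedding):
        """Return the best-matching stored name, or None if nobody is close enough."""
        best_name, best_score = None, self.match_threshold
        for name, (total, _count) in self.profiles.items():
            score = _cosine(total, embedding)
            if score >= best_score:
                best_name, best_score = name, score
        if best_name is not None:
            # Fold the new sample into the profile so it improves over time.
            self.enroll(best_name, embedding)
        return best_name


memory = SpeakerMemory()
memory.enroll("Sarah", [0.9, 0.1, 0.3])          # labeled once by the user
first = memory.identify([0.85, 0.15, 0.35])      # a later meeting, similar voice
second = memory.identify([0.1, 0.9, 0.2])        # an unfamiliar voice
print(first, second)
```

A real system would also persist the profiles between sessions; this sketch keeps them in memory for brevity.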

For teams with recurring meetings, the practical impact is enormous. You no longer have to label speakers by hand at the top of every transcript. Reports, standups, board meetings, and 1-on-1s all arrive with the correct names attached from the moment the transcript is generated.

CraftNote is currently the only major AI note taker with persistent Speaker Memory across all meetings.

What Affects Accuracy in the Real World

Diarization accuracy in practice depends on factors the system does not control. If you care about getting the best possible results, these are the levers.

Audio quality. The biggest single factor. A conference-room omnidirectional mic captures everyone cleanly. A built-in laptop microphone placed too far from the people speaking produces audio that degrades every downstream step.

Background noise. Quiet rooms produce cleaner embeddings. Coffee shops, open offices, and construction outside the window introduce variability that the embedding model has to filter through.

Overlapping speech. Even modern systems struggle with heavy overlap. If your meetings are polite and people mostly wait their turn, diarization accuracy will be higher. Chaotic brainstorming sessions are harder.

Speaker distinctiveness. Voices that differ in pitch, accent, and cadence are easier to separate. Two speakers with very similar acoustic profiles may get conflated occasionally.

Sample length. Short utterances give the system less to work with. If a participant only says "yes" twice in an hour, reliably identifying them is harder than if they speak in full sentences.

For Speaker Memory specifically, labeling consistently — always using the same name for the same person — lets the profile accumulate cleanly over time.

How CraftNote Handles It

- 94.92% accuracy. CraftNote topped independent benchmark testing with 94.92% transcription accuracy across six different audio types, approaching human transcription quality.

- Persistent Speaker Memory. Once a voice is labeled, CraftNote recognizes that person automatically in every future meeting. The only major AI note taker with this capability.

- 100+ languages. Diarization and transcription both operate across over 100 languages, with automatic language detection.

- Bot-free recording. CraftNote captures audio directly from your device microphone and system audio, so no bot appears in the meeting. Works with every video conferencing platform because it operates at the system level.

- Offline support. The only major AI note taker that works completely offline. Recordings made without connectivity are transcribed and diarized when the device reconnects.

- Privacy-first. Data is stored on EU servers in Frankfurt with GDPR compliance. Recordings are encrypted with AES-256 in transit and at rest. Audio is deleted after transcription, and voice data is used only for speaker recognition.

Diarization is one of those technologies that people only notice when it fails. When it works, a transcript is just a conversation you can read. When it does not, the whole document is unusable. Getting it right turns AI meeting notes from a novelty into an actual tool.

FAQs

Is speaker diarization the same as speaker identification?

Closely related but not identical. Diarization segments audio by speaker and clusters segments, producing generic labels like "Speaker 1". Identification goes further by mapping those labels to known names. Speaker Memory is the persistent form of identification across meetings.

How accurate is diarization today?

Modern systems achieve well over 90% accuracy under good conditions. CraftNote reached 94.92% transcription accuracy across six different audio types in benchmark testing. Diarization accuracy degrades with heavy overlap, poor audio quality, and extreme similarity between voices.

Can diarization handle accents?

Yes. Modern embedding models are trained on multilingual, multi-accent datasets. Accents actually help differentiate speakers by adding distinctive patterns to the voice profile.

How many speakers can be diarized reliably?

Most systems handle six to ten speakers in a single meeting without issue. CraftNote's Speaker Memory can store profiles for hundreds of individuals across your entire meeting history.

Is voice data stored securely?

CraftNote stores voice profiles with AES-256 encryption on EU servers in Frankfurt. Voice data is used only for speaker recognition, never shared externally, and never used to train AI models.

Experience Diarization That Actually Works

94.92% transcription accuracy, persistent Speaker Memory across every meeting, 100+ languages, offline support, and GDPR-compliant EU data storage. Download CraftNote and stop labeling speakers by hand.